Prompt Compression: Enhancing Inference and Efficiency with LLMLingua - Goglides Dev 🌱

By A Mystery Man Writer

Description

Let's start with a fundamental concept and then dive deep into the project: what is prompt compression? Tagged with promptcompression, llmlingua, rag, llamaindex.

Reduce Latency of Azure OpenAI GPT Models through Prompt Compression Technique, by Manoranjan Rajguru, Mar 2024

(PDF) Prompt Compression and Contrastive Conditioning for Controllability and Toxicity Reduction in Language Models

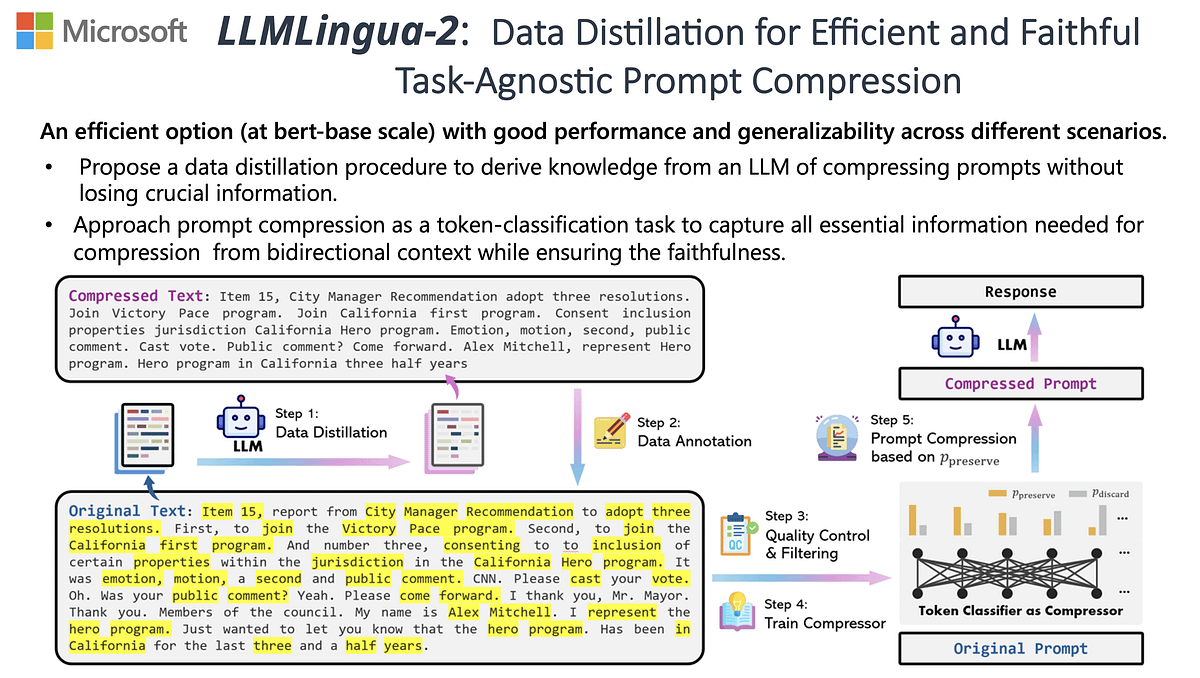

LLMLingua: Innovating LLM efficiency with prompt compression - Microsoft Research

[PDF] Prompt Compression and Contrastive Conditioning for Controllability and Toxicity Reduction in Language Models

LLMLingua: Revolutionizing LLM Inference Performance through 20X Prompt Compression

Top 15 Most Influential Tech Leaders to Follow in 2024 - Goglides Dev 🌱
