Language models might be able to self-correct biases—if you ask them

By A Mystery Man Writer

Description

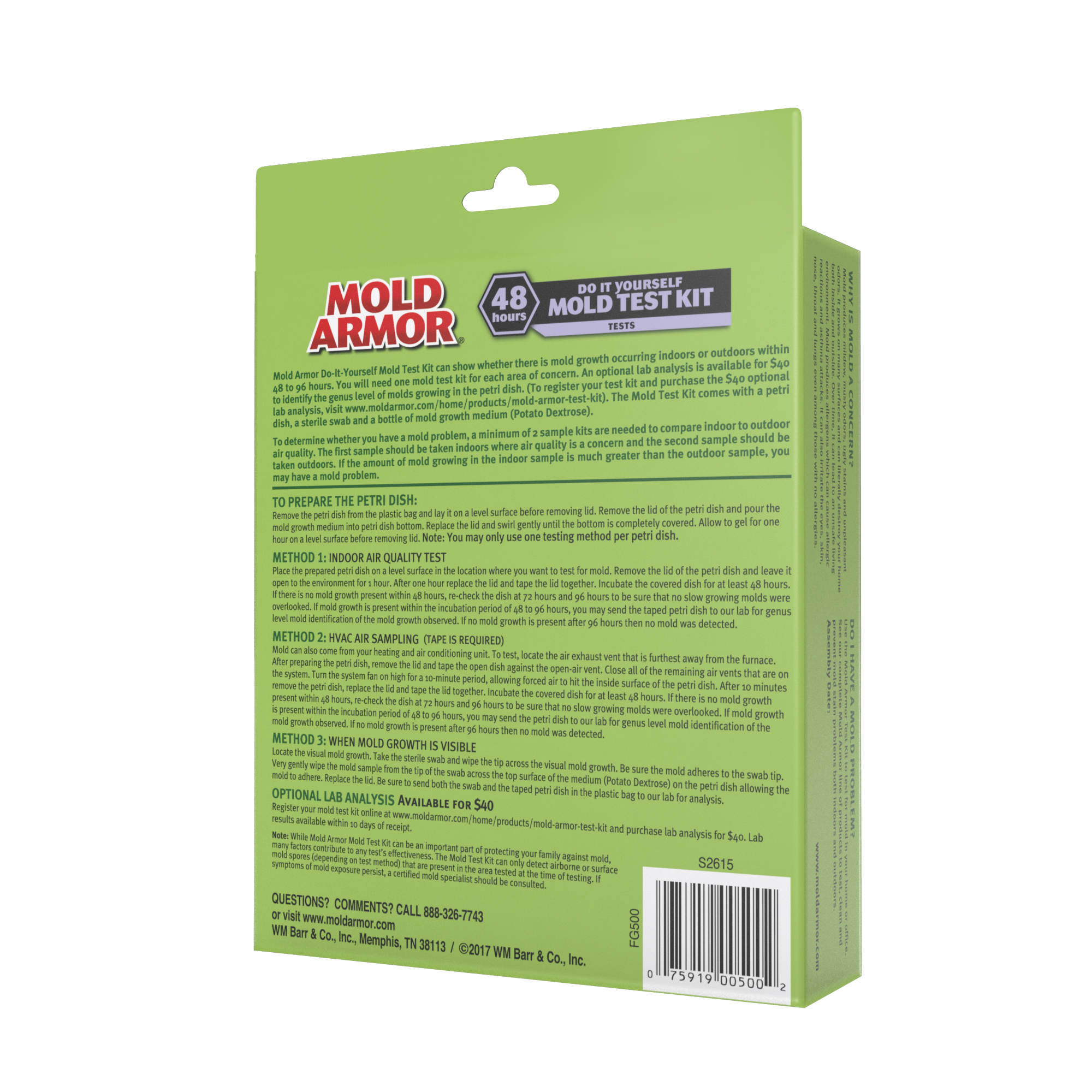

A study from AI lab Anthropic shows how simple natural-language instructions can steer large language models to produce less toxic content.

