MPT-30B: Raising the bar for open-source foundation models

By A Mystery Man Writer

Description

[R] New Open Source LLM: GOAT-7B (SOTA among the 7B models) : r

New in Composer 0.12: Mid-Epoch Resumption with MosaicML Streaming, CometML ImageVisualizer, HuggingFace Model and Tokenizer

Train Faster & Cheaper on AWS with MosaicML Composer

Lightweight, Fast & Reliable Key/value Storage Engine Based on Bitcask

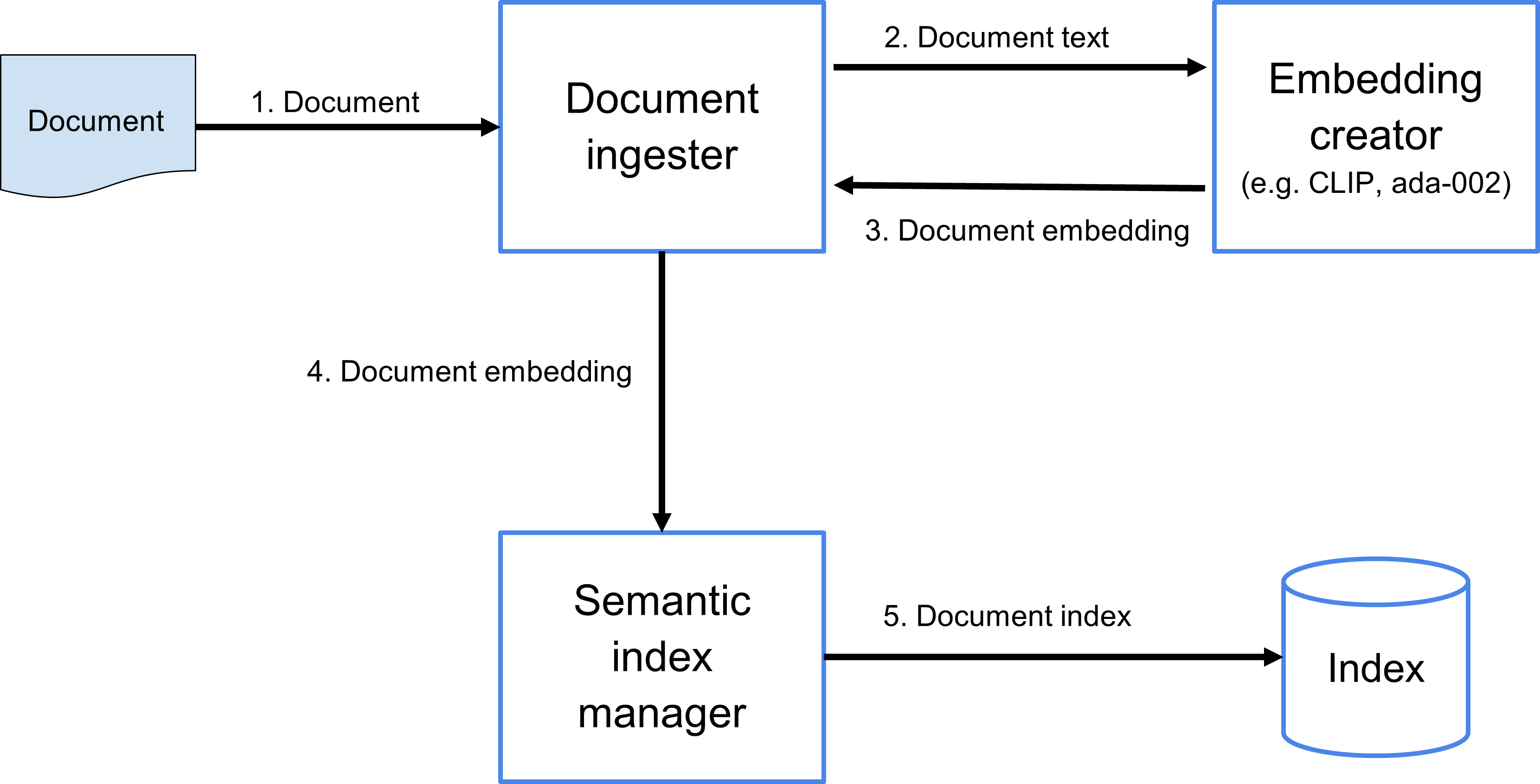

The Code4Lib Journal – Searching for Meaning Rather Than Keywords and Returning Answers Rather Than Links

Announcing MPT-7B-8K: 8K Context Length for Document Understanding

Twelve Labs: Customer Spotlight

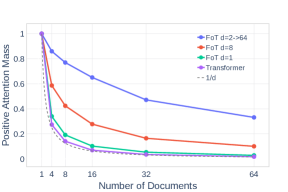

[2307.03170] Focused Transformer: Contrastive Training for Context Scaling

Can large language models reason about medical questions? - ScienceDirect

Survival of the Fittest: Compact Generative AI Models Are the
