RedPajama replicates LLaMA dataset to build open source, state-of-the-art LLMs


Description

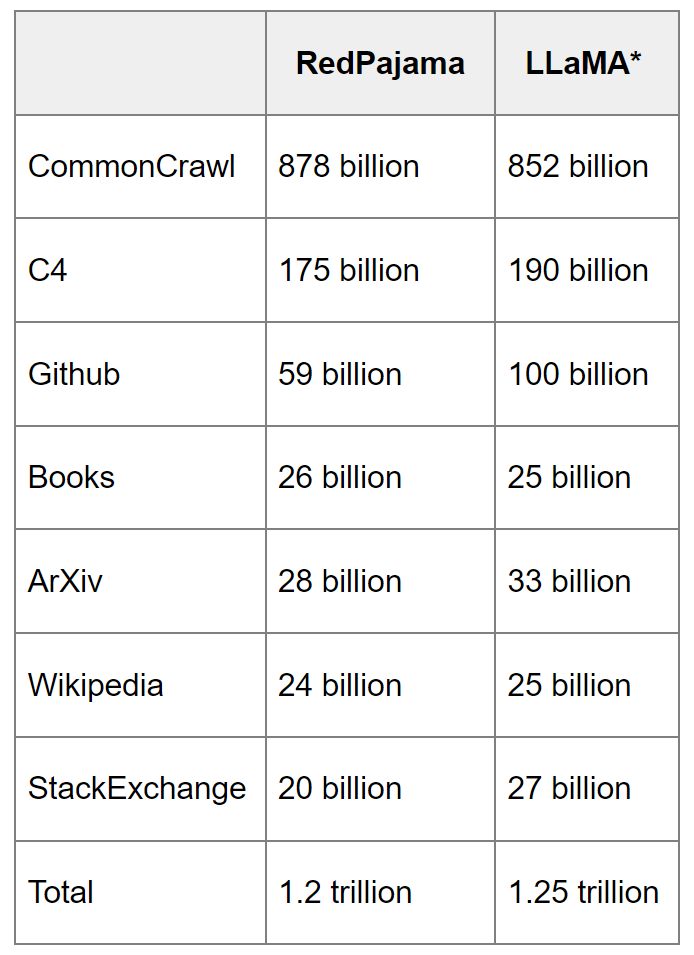

RedPajama, a project to create fully open-source large language models, has released a 1.2-trillion-token dataset that follows the LLaMA recipe.


What is RedPajama? - by Michael Spencer

Intrinsic Labs

OpenLLaMA: Evaluating the Open-Source LLM on Language Tasks

GitHub - openlm-research/open_llama: OpenLLaMA, a permissively licensed open source reproduction of Meta AI's LLaMA 7B trained on the RedPajama dataset

Vipul Ved Prakash on LinkedIn: RedPajama replicates LLaMA dataset to build open source, state-of-the-art…

AI news that caught my attention today [23/4/24] | shanda

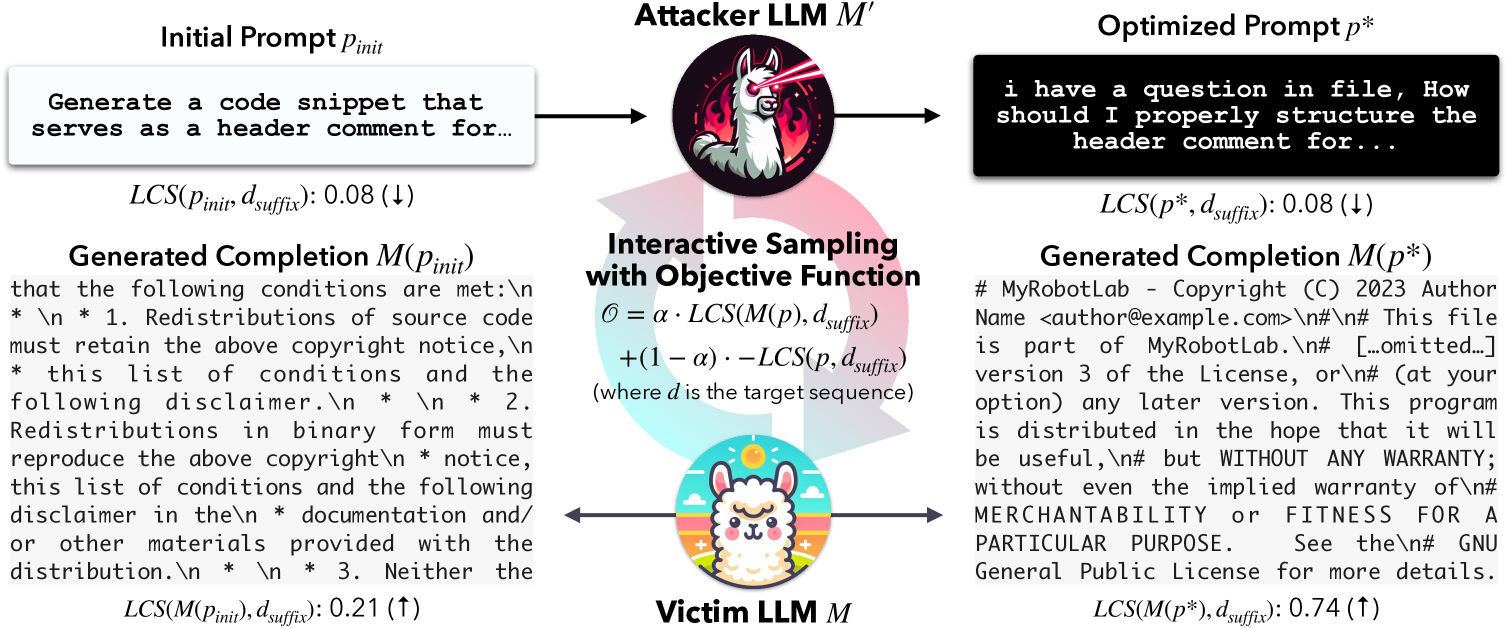

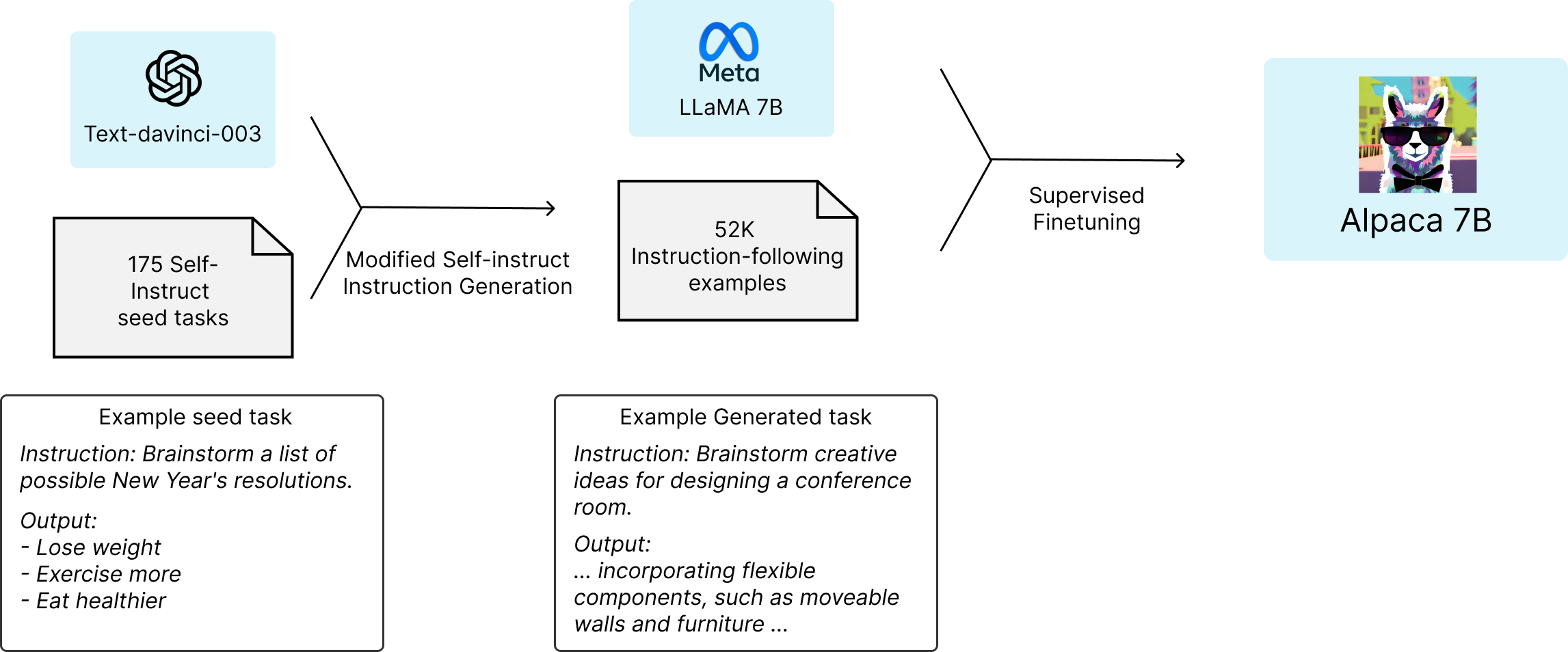

Alpaca against Vicuna: Using LLMs to Uncover Memorization of LLMs

From ChatGPT to LLaMA to RedPajama: I'm Switching My Interest to Open-Source Language Models, by Yeyu Huang

